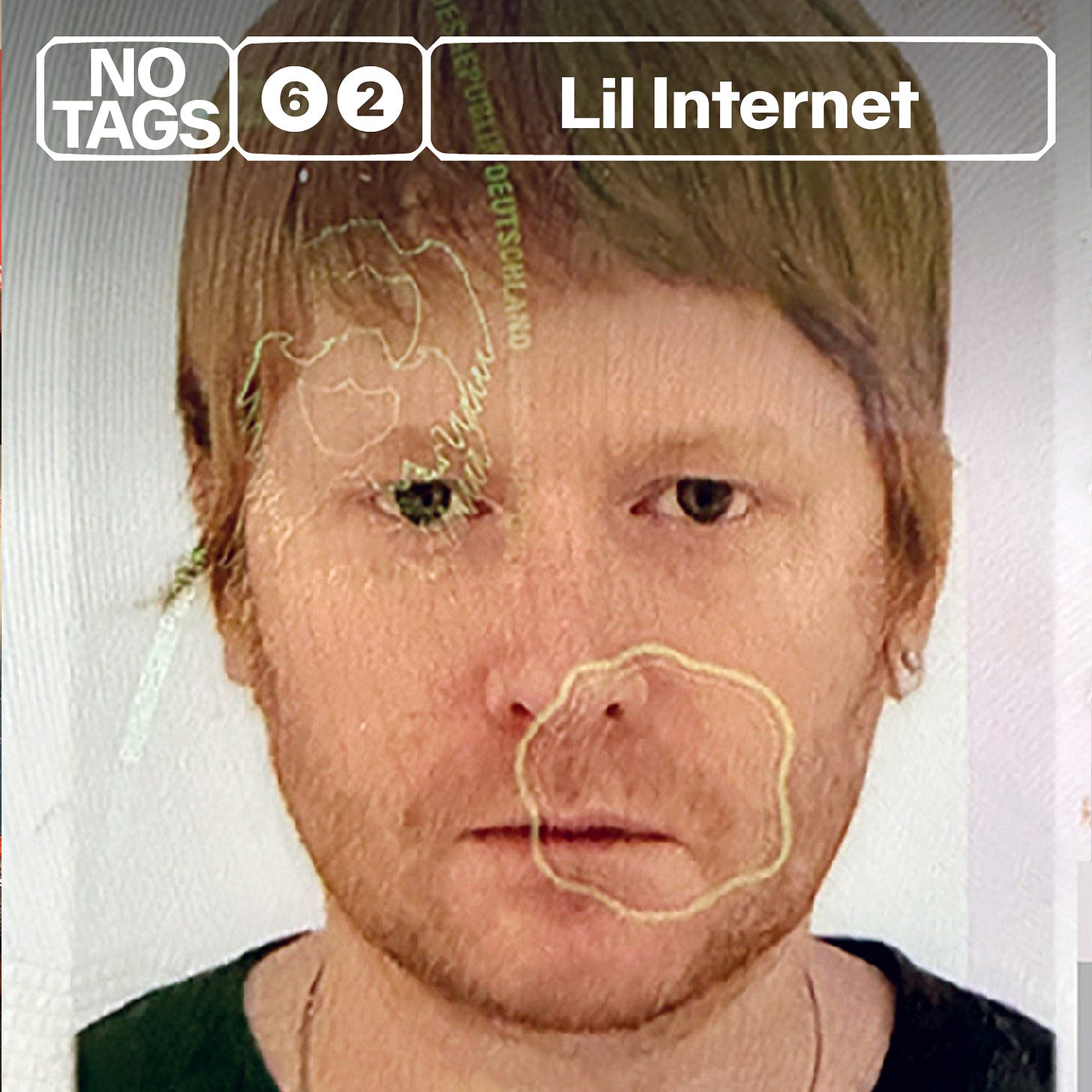

Taganistas may know Lil Internet from various endeavours over the years – his music videos (Beyoncé, Diplo), his high-concept DJ mixes, his ever-present voice on Twitter.

But since 2017 his main focus has been New Models – a website, podcast and Discord that’s emerged as just the sort of music-and-ideas safe space that dorks like us usually bemoan the loss of.

But the reason we finally asked Lil Internet to join us on No Tags is his latest music project – a brand new genre he’s calling gencore (or Generative Hardcore). Showcased across two mixes released in 2024 and 2025, gencore tracks are completely AI-generated from the software Udio, and adhere to the following three rules:

All gencore vocals must be forced hallucinations, output by the model in ‘instrumental’ mode (as a distinctly inhuman glossolalia or phonetic ur-language).

Whoever creates the gencore should not personally be able to say ‘this sounds like X artist or genre’ (except gencore).

To recreate a gencore track using traditional (non-generative) means must be very difficult, very expensive, or simply impossible.

Perhaps most controversially, Lil Internet proposes that because gencore is ‘made in the tradition of sample-based, breakbeat driven dance music’, it arguably marks an evolution – or maybe even the endstate – of what music critic Simon Reynolds named the hardcore continuum.

He opines: ‘Could one even argue that the big AI companies – sampling everything we type, everything we upload, everything we do – follow the same protocol? Perhaps the hardcore continuum has expanded from something we listen to into something we are living through.’

Obviously this was catnip to us – especially as we spent an entire episode last year arguing that AI-generated Japanese city pop must be one of the four horsemen of the apocalypse.

We spent a great hour with Lil Internet talking about gencore and the AI music landscape, how Udio’s quirks give AI music ‘soul’, the moral boundaries of AI music, and Bandcamp’s recent AI ban.

And for the first 30 minutes of the show, we each pick a Winter Olympics sport, compare our gunmanship, and offer some recommendations, including records by Otto Benson and 6amsunset, They Are Gutting a Body of Water’s recent live residency at the ICA and – speaking of fluids! – Kristen Stewart’s staggering directorial debut The Chronology of Water.

Before that, the usual plugs. Our second book is out now – grab it direct from our Shopify page or various IRL bookshops and record stores.

We have a paid tier. We’re not big on bonus content as we’re stretched enough doing this around other jobs, but subscribers do get discounts on our book and live events. More importantly, you get to support an independent podcast that’s truly DIY: we research, record and edit this thing ourselves, and anything we outsource, we pay for out of our own pockets. We do No Tags for the love, but if you enjoy the show and want to show a little love back, you can do so for a mere £5 per month.

If you want to support for free, please leave us a nice review on Apple Podcasts or simply tell a friend. It really helps.

TL: It would be good to start off with an outline of who you are and what you’ve done musically.

Lil Internet: I grew up in Virginia and have been DJing since I was 14. I mowed lawns to buy Technics 1200s and I was making drum and bass on freeware in the late ’90s, like the first Fruity Loops and Cool Edit Pro. I went to college in Boston and at that time Vitalic’s Poney EP changed my life. I still think it’s one of the greatest EPs ever made. You really feel the exhilaration and paranoia of the Y2K era in that record, and it especially bangs again right now because it feels like we’re living through a bit of a similar time.

I got into electro and then more open-format DJing, but there was no scene in Boston for this. I was throwing my own party for a long time while making edits for DJ sets and stuff, all through the blog house era, as it’s now called. I met Azealia Banks on SoundCloud, just through messaging, and then produced ‘Yung Rapunxel’ and ‘Heavy Metal and Reflective’ for her. We toured a bit in the UK and Ireland, I did Glastonbury with her, but I was also doing music videos and everything all swirled together.

I just crashed out, really. I had always had a day job and I couldn’t really make the transition to being either a working producer, because I worked too slow, or a working video director, because my process was too idiosyncratic. I came up experimenting with newly available technology in the context of the internet, when people were still figuring out how it was going to work [but] the industry still had a very specific way of doing things that didn’t really work with the way I did them.

After a couple of dark crashout years I ended up in Berlin in 2015, working in a creative capacity with Boys Noize, who I still work with, and I’ve been in Berlin since. That’s my music career. In terms of conceptual mixtapes, before the AI ones, there’s one I did that people still bring up now, which is the DMX Prayer Monument. DMX had a skit on each of his albums that was a spoken word prayer, so I put those over ambient tracks and they decay over time – while he was simultaneously decaying as a person. It’s like the Disintegration Loops of DMX, or something. Rest in peace.

TL: I have such a distinct memory of those DMX prayer interludes. I would’ve been around 13 when the third DMX album [...And Then There Was X] came out. My stepmum had given me a lift somewhere and I wanted to play the CD in the car. We listened to the whole album and when it got to the prayer interlude I instinctively skipped it, and she asked, ‘why did you skip the prayer?’ She’s Christian and was like, ‘I’ve just sat through an hour of slurs for you to skip the prayer?’

LI: They’re really special, DMX was such an incredible force. I’m very happy that that mixtape has endured in some way. Every once in a while a random person will come up to me at a party and mention it.

TL: I was really intrigued by what you said about your way of working, in terms of new technology, the possibilities of what you could do online, and the way that clashed with the industry’s usual existing way of working. Were you talking specifically about music videos there?

LI: It was both, to be honest. When I did the Azealia Banks tracks there were a lot of people interested in doing sessions and I’d come with one track I had been working on and was really excited about. I quickly figured out you’re supposed to bring like 50 tracks. It’s not ever how I worked. I never learned an instrument, so I always had a hard time writing toplines or melodies.

All of my tracks, especially those Azealia Banks tracks, came from the ether. Oftentimes a track would hit me at two in the morning and I’d have to wake up and get it down and then I would make something good. If I didn’t it would be gone. I couldn’t just sit down and make tracks. Something had to emerge from my non-conscious and then I could work on something. So being a producer wasn’t going to work.

I got into music videos because I knew Diplo from music. He wasn’t a celebrity yet and he asked me to do the video for ‘Express Yourself’ with Nicky Da B from New Orleans – also rest in peace. That blew up when the United States had this whole twerking craze, where they were talking about it on the news. That video got really big, Beyoncé saw it and then she asked me to do a video for her.

The Beyoncé video was literally the second or third music video I’d ever done and I was just forced into it, thrust into this world of people wanting to rep me and pitching on really big videos. I had really never worked! I went to film school, but I rushed through it in three years. I was doing audio and film and was really more focused on audio. I hadn’t been on a real video set ever or anything, so I had to fake it and try to figure out in real time how it works to actually shoot a music video.

I would go with two other people, a producer and a cinematographer. For ‘Express Yourself’, we probably shot for 11 days in New Orleans, staying in a cheap motel. That’s what I did – I spent time at a place shooting, shooting, shooting, then spent a ton of time editing, editing, editing. There’s a lot of resonance between that process and working on music for me, because it’s all about intuiting rhythms and how the different shots make you feel in the way that different timbres or sounds make you feel. [But when I] tried to work commercially as a job, it was really hard for me to go from three people for 10 days to 20 people for one day. I just couldn’t really figure out a way to pull it off.

But in a way, I’m glad it didn’t really work out because the ground is moving so rapidly on all of these things, especially in music videos. I see crazy talented people posting their rates on Instagram and it’s like $1,500 for a day and the edit. That’s crazy! You have to shoot 100 videos in a year to be barely middle class in the United States.

TL: You also made a radio play about Elon Musk. I’d like a moment for that.

LI: Radio plays are something of a forgotten medium. For some reason I had gotten into listening to old radio dramas from the ’30s and ’40s or something. Once we started doing the podcast I wanted to play around with this format and see what I could do with it. Today I rarely encounter it, though I just realised that, of course, there’s that Urbanomic book Sonic Faction, about radio dramas Mark Fisher and Kode9 were working on. Apparently Hyperdub even has a sublabel just for audio essays.

TL: I think it’s called Flatlines.

LI: Yeah, Flatlines. The Elon Musk one is just ridiculous, it’s very abject and puerile and I’m very proud of the density and velocity of those elements in it. It’s hard to explain, it would sound dumb to explain it, but it exists, it’s 30 minutes long, it’s a fully scored and built out world. It ends with the IRS blowing up a spaceship full of clones.

CR: Could you tell us a little bit more about the sort of creative and intellectual arc that you’ve been following and how you came to be part of New Models?

LI: I had always read a bit of media theory. One time I was in an occult bookshop and I just randomly picked up Hatred of Capitalism, which is a Semiotext(e) collection of essays. To be honest, I was really a party bopper and a mess, I didn’t have friends who were into that kind of stuff. But through Twitter my now-wife, Caroline Busta, asked me if I wanted to write something for Artforum when she was the associate editor. I wrote it and then she said the copy was really, really good. Later on she also moved to Berlin to work at Texte zur Kunst and asked me to write something for them.

Through Carly I started focusing a bit more on writing, or being able to articulate these more critical or theoretical ideas about how I was seeing new media and culture and new technologies operating and how they’re changing us. In 2017, Carly, myself and Daniel Keller – although it’s now just Carly and I – started New Models. At first it was just going to be an aggregator. We took the layout of Drudge Report and borrowed that model, because their code basically hasn’t changed since 1998. It was still the number one news site for almost 20 years, so there was something very structurally effective about that website in a simple way.

We were thinking a lot about algorithms at the time, about the physics of online platforms and how their UX and incentives affect behaviour, or operate on viewers in a way sometimes you aren’t even conscious of. We figured there was some black magic in the Drudge Report, so we borrowed that structure to make an aggregator about media theory and cultural criticism. Very quickly we started podcasting because I knew how to do it, so the podcast then ended up becoming the main thing.

CR: The interesting result from New Models is that it’s not just a website or a podcast, it’s actually a self-sustaining community of people who are interested in the same things. It seems like a really appealing model for a functioning community of friendly-but-critical people pushing each other to think a bit harder.

LI: Part of founding New Models was this realisation that I knew a lot of really smart people in music, and people who were getting more interested in media theory and the academic side of cultural criticism, just because of how much it was affecting their day-to-day life and the media industries that they worked in. But it really wasn’t that easy to access, you had to dig for it.

I knew that if you basically bridged those two spaces with a bit of aggregation, it made it so that smart people working in media industries didn’t have to dig through academic journals or flip through 50 pages of essays on gesture in 19th century painting to get to one that’s talking about, say, how memetics is changing politics. The audience was there for it.

I think that ended up turning out to be true – our audience spans a lot of people from creative industries and people in more academic and intellectual spaces, as well as the art world and some people who didn’t even graduate high school but are just savants at the internet. I feel really good that we were able to triangulate that audience correctly and actually reach them.

TL: We should talk about Illegal Generation, which is a pair of mixes you’ve made of something you’ve coined gencore – ‘Generative Hardcore’ – which is all AI-generated music you’ve made on Udio. How and when did you start experimenting with AI-generated music?

LI: I started playing with AI-generated music in early 2024 because it was there and it’s miraculous. As I said, I never learned instruments, but I’m a sucker for beautiful chord progressions and I really like melodic music in general but I could never write it. AI is just so good at chord progressions and melodies, so it was thrilling. One thing I learned is that the slightest expression of agency, even if you just clicked one button, is enough to make you feel like you have some ownership over the thing that results. Obviously prompting is a lot of work and there’s a lot that goes into it. I got the feeling of creating something that I’ve always wanted to create in some regard.

I quickly decided that what I want to do is make music that could only be made with AI. I wasn’t at all interested in making music that already existed with AI, so I really leaned into the weirdness and the artefacts and it became my hobby for the next few months. Eventually I ended up with 20 or 30 tracks – after thousands of generations. Generative AI has that really toxic slot machine mechanic, because you’re clicking, you’re waiting, maybe the reward is good, maybe it’s not. It totally hacks your brain, it sucks. But by the end I had 20 or 30 tracks I thought were really cool and sounded legitimately interesting, so I decided to put them all together into a mixtape, and that was Illegal Generation Volume 1.

It was a concept tape about a future where AI music is banned and the internet can detect if you’ve uploaded any AI music – which is oddly prescient now! – so gencore had to be distributed on cassette tapes. The quality of the model at the time was kind of poor, it did not sound very good, like a 96kbps YouTube rip or something, so I put cassette emulation plugins on it to make the poor quality seem intentional.

CR: You’ve ended up with three rules of gencore. I’m interested to know at what point the rules emerged and you started using them, because you couldn’t have started off with them.

LI: The rules were definitely retroactively written, but I had the feeling from the beginning that this was what I was aiming for. The three rules of gencore are, one, it shouldn’t sound like any artist that you can name, it shouldn’t be directly emulating something that exists or sound too close to something that exists.

Two, all the vocals must be hallucinated, like AI glossolalia. What I always did was generate them in instrumental mode, but put a lot of prompts for vocals so the AI would just hallucinate vocals. It would be these strange voices speaking total gibberish, sometimes it would be Spanish gibberish, or English gibberish, but none of the words had any meaning. That did not exist before these AI models, so I wanted to really lean into that. They end up a bit wilder because they’re purely emergent, they’re not supposed to be there. They’re not refined or sculpted by any parameters that are meant to make vocals better, to follow your lyrics or whatever. They just come out of the music.

The third rule is that the result must be extremely difficult, expensive, or impossible to recreate with traditional music production. Rules two and three I’ve pretty much stuck to; one got a little bit weird because after I released the mixtape, or while I was putting them all together, this Galen Tipton and Death’s Dynamic Shroud album, You Like Music, came out. It is the closest thing I’ve heard to the general sound I was getting out of the models, so there’s a bit of a weird convergence there.

CR: I’d like to know a bit more about what the prompt engineering actually entailed: how you were speaking to Udio and whether there were ways that you learned to get the best results.

LI: You wouldn’t be able to reverse engineer the prompts listening to the music. A lot of it was about injecting randomness, or getting the model to get outside of these troughs, these centres of gravity that the models tend to get pulled into. When they do, you get into more trope-y territory, or into a more regular, generic sound.

If you [prompt Udio to create] ‘solo breakcore drumming’, which doesn’t really make sense, it would actually get the drums to go really wild because it’s triangulating, in multi-dimensional space, a solo live drummer and breakcore. That would get it to alternate the patterns really quickly, because drum solos aren’t generally following a 4/4 metronome. Sometimes you’d just throw a genre, like Bollywood, in there and see what happens. Sometimes there’d be a particular sound I’d want and I’d try to get [it to create], but that didn’t always work.

What was most important was the feedback loop of trying things and hearing what the machine changed because of that. That directed the outcome more than the actual meaning of the words. You might end up requesting menacing, uplifting solo breakcore drumming, Memphis reggaeton, Bollywood singing, Tuvan throat singing and post-industrial dembow – and the output wouldn’t really sound like that, but you’d end up understanding what all these different phrases or words pulled the model towards. Eventually you’d end up with a string that works.

CR: How much of the output was beyond what you felt you could make sense of? People talk a lot about AI hallucinations and the kind of spookiness that neural nets can spit out – how much of what you were getting felt inexplicable, or genuinely new or different?

LI: It was more of a question of incoherence. Oftentimes, because the prompts were so incoherent, you would not get very coherent results. Sometimes you’d get things that would have a bit of something cool in it, like a couple of bars that are really cool, or one piece of vocals. Maybe you’d cut that section out and then generate off of it. You really had to ride this line between coherence and incoherence.

The other very interesting thing about the models is that they would spit out sounds I had never heard before, in a way that was sometimes the most exhilarating part. It’s literally timbres you’ve never heard before. There’s no soft synth that you can make this sound on. It emerges out of the triangulation of 800 points in machine space. But it’s really about trying to still get coherent music out of prompts that are engineered to push the model towards incoherence. That’s how you would get something interesting.

TL: The intro to Illegal Generation Vol. 2 proposes AI music as the logical end state of the hardcore continuum. You say: ‘Music has always been made using the latest technology and stolen data, uncleared samples. In this sense, gencore is part of the natural evolution of what music critic Simon Reynolds named the hardcore continuum. Could one even argue that the big AI companies sampling everything we type, everything we upload, everything we do, follow the same protocol? Perhaps the hardcore continuum has expanded from something we listen to into something we are living through.’

Whenever I have these conversations about AI-generated music, I often find myself coming back to the same point, which is that if you have an issue with this, then you also have to have an issue with sampling – not to mention everything that comes between the two, like generative MIDI programs or whatever. Do you think that’s fair?

LI: I think it’s fair to a certain degree. In Illegal Generation Vol. 2, there’s this refrain throughout the mixtape where the story of Gregory Coleman, who’s the drummer who played the Amen break, is told. He never got paid anything for playing literally the most used breakbeat of all time. Actually, he was living on the streets when he died. He’s this absolutely tragic casualty of advancing music technology. In the mixtape there’s this line that we’re all becoming Gregory Coleman, there’s this giant extractive machine that is taking the product of everything we’ve input into the internet and using it to drive this greater technological process forward. I do think that it’s strange that people who are adamantly anti-AI aren’t asking for jungle to be removed from Bandcamp. It really was literally computers and stolen data, it was all records made out of other records.

I think where the distinction is, though, is that AI music models aren’t sampling songs. The songs that they’re trained on do not exist inside that model anywhere at all. The way these models work is through neural networks. It’s closer to the way our own brain works than it is to anything else we have a reference for previously. The way the models work is kind of like the way we understand a genre. If someone says ‘make up a shoegaze song in your head’, you can imagine the fuzzy characteristics of a shoegaze song. That’s kind of the same thing these AI generative models are doing.

What they do when they’re trained on a song is they make millions of connections between all of the aspects of that song and all of the other music in its training data, so it understands the patterns of associations and relationships between everything happening in the music. That’s also how we understand shoegaze. It has to have this kind of timbral characteristic, this kind of guitar, this kind of vocal processing, so it’s really doing a similar thing to that.

I always think about the ‘Blurred Lines’ case, with Pharrell and Robin Thicke versus the Marvin Gaye estate, which I think is one of the worst rulings in music copyright history. They were found guilty of stealing a vibe, right? They had an idea of the vibe of a Marvin Gaye song in their head and made a song that really shares nothing with the Marvin Gaye song and they still lost the case. The ‘Blurred Lines’ case basically makes ‘type beats’ illegal – and all AI does is make ‘type beats’, even if that’s breakcore-deconstructed-reggaeton-Bollywood-Baltimore-Memphis-Club-Balearic-kabuki-type beat.

This is the ontological difference between what the models are doing and sampling. I tend to bracket that conversation from the grander conversation about acceleration and AI and what it’s going to do to society. That’s a conversation I love to have and I am afraid of that future, but I also think it’s a much bigger and more complicated conversation. When we’re looking at AI-generated music, I find this prohibitionary, absolutist reaction to it to kind of be misguided.

TL: I was intrigued by something you tweeted a little while ago: ‘Suno is now 10 times superior to Udio in all ways except the most important one. Suno sounds perfect but you feel nothing. Udio sounds like trash but gives you frisson. Suno remastering scrubs the ‘soul’ out of Udio songs, so I think the ‘soul’ is all weird harmonics and psychoacoustics.’

The idea of imperfections and these weird ghosts in the AI generative machine giving the music soul is really interesting.

LI: The reason I really loved Udio, even though it sounds objectively worse than Suno, is because you would have all of these harmonic fragments. It’s synthesising a synth line out of a million synth lines. There’s something psychoacoustically weird going on with these AI outputs, where sometimes, if you listen to it on different headphones, you’ll hear a certain line, or pad, or texture distinctly differently, sometimes at a different volume. A totally different discernible layer will suddenly reveal itself.

It suggests that there is another rabbit hole opening up of what a producer can do when making music. This is all speculative, but could you make a song that sounds different to two different people? Can you make a song that sounds different almost every time you listen to it because of the timbral shifts, depending on context or position in a room or what you’re listening to it on? The weirdness of that in particular was distinct enough to make me think maybe there’s still something else here sonically to unlock that we haven’t really had access to before.

We’re in this AI music winter right now, because UMG and Warner sued Udio and Suno respectively. Udio is tied to UMG and Suno’s tied to Warner. UMG has been notoriously harsh in its position on AI music and they killed the ability to download anything from Udio. It’s now technically copyrighted by UMG and you can’t upload audio generations anywhere. Apparently you can still download things on Suno, it seems they’re a little bit looser at Warner – but who knows what the future is.

Right now, open source models haven’t caught up. Even with Suno, I haven’t been able to crack it to get it to do truly weird stuff that I want it to do. There’s a chance that this Udio moment was just this moment in time and we’ll have to wait who knows how long before it makes itself available again, or something similar does. It was the first time where it suggested to me that there’s a whole other realm of sound and composition or production that we haven’t been able to access yet, and maybe AI music models can unlock that.

TL: Talking about sample-based music often takes us back to questions about copyright, and it often highlights just how nonsensical and impractical a lot of copyright law actually is. I’m curious about how AI affects this – is there a feasible future where AI music destroys the logic of copyright for good?

LI: I don’t really have a position or prediction on it. I think things are definitely going to get very weird. I often bring up the example of Playboi Carti, where he wanted to do something different than [his 2020 album] Whole Lotta Red, so his fans decided to just AI-generate more Whole Lotta Red. Now there’s this guy called TWXN, who’s some white kid who hides his face but is doing this Opium-core pantomime of Carti with AI-generated Carti vocals. If the artist is not doing what the fans want, the fans will just clone the artist basically, with AI, to make the artist continue to make the music that they want. That’s a really tricky, weird thing that’s going to emerge, maybe more than copyright. What happens for artists when the fans have the option of just making more of the kind of music they want from you if you’re not willing to deliver it to them?

Now Udio is with UMG, they say that what it’s going to be for is for fans to engage with and remix their favourite artists’ music. It might even be the case that fans might generate the artist’s next hit that they then record for real or something.

TL: I can totally see that.

LI: You have this cybernetic loop between artist, fan and machine. You have a built-in focus group. This is tangentially related to this conversation, but I think it’s also just important to keep in mind because it exists at the scale that all of these things, like AI projects and models, occupy. Anna’s Archive, the huge pirate library of books and academic papers, I think the largest in the world, just announced that they had successfully scraped the entirety of Spotify, something like 300 million songs, all in 320kbps. All of Spotify. What happens when you train an AI model on the entirety of Spotify at high fidelity?

Music is absolutely going to change. I think it’s going to be bad for producers, but I do not think AI is going to be the end of the artist at all. Actually, there’ll probably be more artists, in terms of musicians, than ever, but again it’s just an acceleration or an intensification of something we’ve seen already happening. Think about rap artists who bought a beat for $300 and it became a massive hit. It’s going to be a very different landscape for music in the next few years with a lot of weird new combinations of fandom, AI and artists.

CR: The Anna’s Archive story is funny but also darkly ironic, because when Spotify first launched, they didn’t have the rights to the songs either. Spotify was built on a pirated collection of music right at the beginning, and that was how they invented the user experience. So for something like Anna’s Archive to come along and be like, ‘we’re just going to take Spotify right back into the pirated waters’ – I find that amusing, even if it’s obviously chaotic.

TL: I think my feelings on AI generative music often come back to intention. I don’t have any issue with it as a tool. I think like all music, some case studies are going to be interesting and some won’t. But when you get a situation like people flooding YouTube with replicas of Japanese city pop records, to me that’s when it crosses a moral line into a form of cultural erasure or cultural retelling that I find incredibly uncomfortable. I was wondering if you have a line, or moral standpoint, on when AI music is good or bad?

LI: I think those things are disturbing and I think it’s going to happen for photos and for history and for moving images. We’re rushing towards a very, very crazy kind of ontological fracturing. I look at all this stuff rather soberly because I’m just too black-pilled to think you can roll it back. There’s a huge spam problem with AI, of course, but short form video itself, the entire physics of TikTok, is just this brain hacking thing without AI content, right? It’s an intensification. There’s going to be a lot of bad and disturbing and terrible uses of AI-generated everything.

I’ve been starting to think of AI, especially on the scale it occupies and the fact that it does have agency in some way and it is intelligent, as if we conjured a God on earth with a graphical user interface for it and we don’t have any rituals to engage with it. Never before have we had a God without clear rituals for correctly engaging with it. Rather than some prohibitionary, ‘oh we’ve got to stop AI and roll it back’ [reaction], which I don’t think is possible, I think we need to start seriously thinking about the rituals and protocols. How do we make this help us? How do we protect ourselves from this? How do we protect the earth and maybe all organic life from this? That is a path, I think, where there’s more possibility for success than hoping the whole thing will go away, or can be undone.

CR: Increasingly I’m seeing this argument that even if AI is taking over and automating so much of our lives and our jobs, or even automating our thinking processes and our creative processes, that a by-product will be that genuine, authentic human ideas and human intervention will therefore become more valuable – almost like artisanal products or craft products.

I definitely think that sounds like copium for humanities losers like me, who thought that they had made the biggest mistake ever when they realised that they couldn’t code! But now it’s like, great, maybe writing a prompt is the thing that you really need to be able to do.

LI: Podcasting is wildly resilient. We’re all extremely lucky, for real.

CR: What do you make of this idea that human input will remain the most valuable, or even gain in value?

LI: I think the craft analogy is right. A craft IPA is not Budweiser, it’s kind of a niche and those niches can thrive. People can make a living off of them and I absolutely think that will exist and there’ll be plenty of examples of that. I think there’s going to be a lot of organised renegotiation of people’s relationship to network technology in general.

I also think, even if the music is AI – like AI music without a human figurehead, without an artist – how many SoundCloud rappers are basically this, like human avatars for these media assemblages? I think that’s very much going to matter. My two-year-old is really into SOPHIE now, she listens to ‘Immaterial’ over and over. And I realised: this song hits different because SOPHIE died. There’s a gravity and intensity to it now because of this, but an AI artist can’t die. The affective charge of the music can’t change depending on the context of that artist’s life or death. I think human artists will absolutely still have a very large role. I don’t think there will ever be a human-less pop star.

CR: Even with gencore, ultimately the reason that I find it interesting, as well as the fact that I did genuinely listen to it and find it listenable, is your intention. It’s the framing that you’ve given it, it’s the fact that you’ve explained what you were trying to do.

More broadly speaking, what I know about you as a creative person, as someone who thinks about these things and the fact that you’re experimenting in this area, all of that feeds into it and makes it interesting. If it doesn’t have any of that cultural metadata, for me it just doesn’t have that kind of affective value.

LI: I think people have been tricked by really utilitarian playlists like ‘Working Out’ or ‘Coffee Bar’ into thinking that the context around individual songs doesn’t really matter, that songs are just sounds that make you feel a particular way. As soon as you get above the £3 bottle of wine level and start to actually pay attention to your feelings, to a little more of the subtlety of what’s going on when you’re listening to music or following an artist, you realise that context is absolutely important. You can’t just excise it and end up with something that’s qualitatively as good.

TL: In the last week, Bandcamp has banned AI music from the platform – what are your instinctive thoughts on this?

LI: It bothers me less that they did it than the conversation around it does. I’m just so over culture wars playing out on social media, it’s a really bad place to discuss any issue of consequence. I think Bandcamp probably did it because it’s really hard to manage the AI spam that’s being uploaded. I also think Holly Herndon is right in her reply, which questions why she, as an artist who uses AI really deliberately, in novel ways and as part of a bigger concept, would be disallowed from that platform.

Of course, it will probably require a human to discern those things and that’s a logistical impossibility. I can’t blame them. It’s not ideal, but I could easily imagine AI uploads being a huge drag on their logistics and ability to operate, so it makes sense. I don’t agree that anything using AI should be banned from all platforms at all, but I understand why Bandcamp did it.

CR: You can’t necessarily stop AI from appearing where it shouldn’t do, but you can’t ban it either, because then that bans the creative possibility. It’s just going to be a mess, isn’t it?

TL: I don’t understand how Bandcamp are going to enforce it.

LI: I’m not quite sure of the tech behind it. I would say generally, overall, there are probably going to be more bad things than good things, but it’s going to be interesting and there’ll be a very different world at the end of it.

CR: So we ask everyone who comes on the show to recommend us a film. We’ve become real Letterboxd dweebs in our elder years.

LI: I would recommend Sirāt. First, it’s very beautiful, it’s very synaesthetic, the score and the sound are incredible. The environment is empty and menacing and essentially you’re following these people who tried their hardest to live outside of the larger machine, so to speak, and pursue a life of collectivity and hedonism. Eventually the machine, or its traces, its exhaust, catches up with them.

It’s very much an apocalyptic movie, and very much a rave movie, although it’s about the free tekno scene. It’s very much 2026 vibes, definitely for the worse, but very much the vibe of this time. Listen to Vitalic’s Poney EP afterwards. That’s what I’m playing at the end of the world.